Black Sabbath's (almost) last show ever

In light of Ozzy Osbourne's recent passing, I thought it’d be nice to share a story. Back in 2017, hardly a year after we released OffShoot, we received an email:

"Dear Hedge,

I'm looking at solutions for a gig I've been offered where I'd need to checksum transfer up to 15 cameras as quickly as possible. What are my options?"

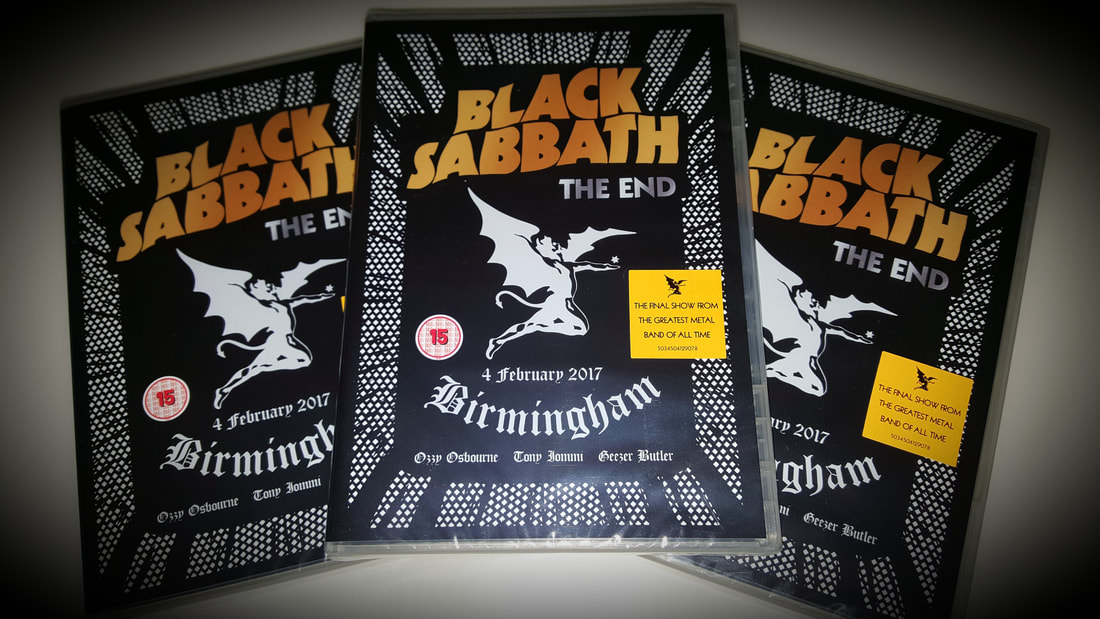

After a few emails back and forth, this production turned out to be high stakes: Black Sabbath would end their world tour with a final show back where it all began in 1968, Birmingham.

The band considered this show to be their last act, so they wouldn't skimp on recording it for a DVD release. As there would literally be no second chances, the producer was a bit anxious and asked whether the Hedge team could attend, just in case they needed help - a decision nobody ended up regretting. Who were we to decline such a request? After all, this was Black Sabbath 🤘

Now, this wouldn't be much of a read if this was a straightforward story about how the godfathers of heavy metal played an amazing show, recorded the whole thing with a heap of great cameras and glass, and all went according to plan. The first thing did happen; it was an amazing show. Ozzy's voice and performance were both great, and Tony Iommi was on fire.

The data handling? That’s where things turned into a huge and exciting challenge.

Prep

Some weeks ahead of the show, it was decided the show would be shot exclusively on Sony F5 cameras. They would be shooting HD on SxS cards, recording ProRes 422 HQ (220 Mbps). Even with that many cameras, that's actually not a gigantic heap of data - one SxS card can easily record a whole show in ProRes HQ.

Mike, the show’s DIT, calculated they needed 3TB, and thus had production purchase two 4TB Thunderbolt drives. He specified they should be RAID for extra speed (RAID 0, and no, RAID IS NOT A BACKUP), while copying to two separate drives meant there was the safety net of being able to physically separate the backups. Best of both worlds 👍
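For the curious, here is a rough sketch of how a bitrate turns into a storage estimate. It is not necessarily how Mike did the math: the function name is made up for illustration, the two-hour running time is an assumption, and audio and container overhead are ignored.

```python
def recording_size_tb(bitrate_mbps: float, duration_hours: float, cameras: int) -> float:
    """Estimate total recorded data in (decimal) terabytes."""
    bytes_per_second = bitrate_mbps * 1_000_000 / 8  # megabits -> bytes
    total_bytes = bytes_per_second * duration_hours * 3600 * cameras
    return total_bytes / 1e12


# Assumed inputs: ProRes 422 HQ at 220 Mbps, a two-hour show, 15 cameras.
estimate_tb = recording_size_tb(bitrate_mbps=220, duration_hours=2, cameras=15)
print(f"Estimated footage: {estimate_tb:.2f} TB per copy")
```

Under those assumptions the estimate lands just under 3 TB per copy, which is in line with the 3TB figure above.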

To speed up offloading even more, and reduce the risk of a card dying unexpectedly, each camera would do at least one card swap. That meant we needed thirty cards - each rental cam came with two 128 GB cards, so that was sorted.

Offloading multiple cards simultaneously without a speed loss was Hedge's first innovation, and the show's DIT realized early on that this feature would be a big help. Besides two Thunderbolt busses, his MacBook Pro also had two USB busses, so the original plan was to stack a bunch of card readers using USB 3.0 hubs. That ensured all available bandwidth would be used at all times. At most, we’d see a throughput of around 1 TB per hour, and finish no later than 1am.

Bostin, as they say in Birmingham.

Showtime

On the day of the show, things got rather interesting… in the morning, we heard we were going to shoot in 4K!

Back then, 4K was not a standard format for live shows. DVDs weren't large enough for 4K concert videos; you needed Blu-ray for that. 4K televisions were only just starting to become affordable. However, Black Sabbath wanted their last show to be future proof - a decision that (still) makes total sense.

Back then, recording 4K on high-end cameras typically meant needing an external recorder. Sony, however, had just released a firmware update that made it possible to output 4K over SDI (for the live visuals) while at the same time recording XAVC 4K on internal SxS Pro+ cards. Using XAVC 4K with a bitrate of 480 Mbps meant two things:

We needed new cards; our SxS cards didn’t support this codec’s bitrate.

We needed a lot of them! One SxS Pro+ card would max out at 25 minutes of recording.

Runners were sent out in a frenzy to rental houses across the UK to find more cards. All in all, we managed to source a total of sixty cards within hours!

Back to our spreadsheet: a two-hour show meant we’d receive six full cards per cam, which was quite a bit more than the four we had. That meant we’d have to rotate at least two cards per camera back into production right after offloading them.

That meant two things: the DPs had to coordinate with the director when to do a card swap, to ensure there were always enough cameras rolling. And before the fourth card filled up, card 1 would need to have been offloaded and brought back to the cam. Scary 😱

First, we calculated the number of terabytes we'd need:

6 cards x 250 GB x 15 cams x 2 backups = 45 TB.

We had 8 TB…
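The same back-of-the-envelope sum as a tiny script, using only the numbers above. The function and variable names are just for illustration.

```python
def storage_needed_tb(cards_per_cam: int, card_size_gb: int, cams: int, copies: int) -> float:
    """Total storage needed in decimal terabytes (1 TB = 1000 GB)."""
    return cards_per_cam * card_size_gb * cams * copies / 1000


needed_tb = storage_needed_tb(cards_per_cam=6, card_size_gb=250, cams=15, copies=2)
available_tb = 8  # the two 4TB drives bought for the HD plan
shortfall_tb = needed_tb - available_tb

print(f"Needed: {needed_tb:.0f} TB, available: {available_tb} TB, short by {shortfall_tb:.0f} TB")
```

In other words, we were 37 TB short on the morning of the show.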

Worse, based on test recordings done in the afternoon, offloading all these cards would likely finish late in the evening of the following day. That turned out to be the real problem - those cards needed to be back at the rental houses before the next morning to prevent getting billed a second day.

Divide and conquer

So, we had a bit of a problem at hand. How could we finish offloading all those cards well before 8am? We clearly needed more gear, but at that time, it was already past noon on a Saturday so we knew our options would be limited.

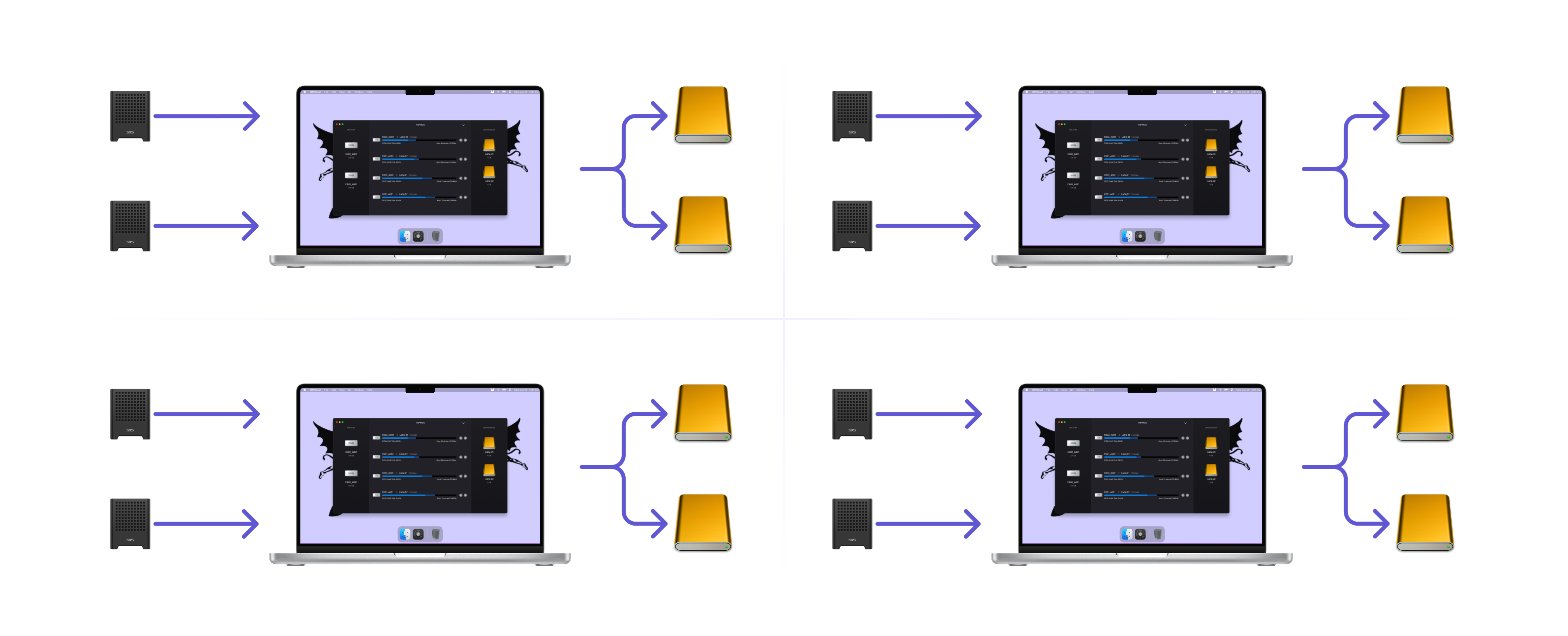

We calculated that our current setup could finish offloading 22 cards before 8am. That was around a quarter of the number of cards we’d fill. The math was simple: we had to quadruple our setup. After all, it's easier to scale out than to try to improve a single setup. I had brought an extra MacBook Pro, and so had Mike and a colleague, so that was quickly settled.
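As a quick sanity check on that "quarter", here is the ratio worked out from the figures in this story (six cards per camera, fifteen cameras, 22 cards per setup). The variable names are only illustrative.

```python
cards_per_cam = 6
cams = 15
cards_per_setup_before_8am = 22  # what one offload station could finish in time

total_cards = cards_per_cam * cams
fraction_covered = cards_per_setup_before_8am / total_cards
scale_factor = total_cards / cards_per_setup_before_8am

print(f"{total_cards} cards to offload")
print(f"One setup covers {fraction_covered:.0%} of them")
print(f"Setups needed: about {scale_factor:.1f}")
```

The factor comes out a hair above four, which we rounded to four stations.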

A runner was sent out to buy more drives. Due to the time of day, the local Apple store was ultimately the only viable option. The fastest thing they had were LaCie Thunderbolt RAID drives, and we cleaned out their stock. We now had 12 Thunderbolt drives, 4 laptops, 8 card readers, 60 cards, and a very, very long night ahead of us.

Showtime

The show went swimmingly; this producer did a great job by understanding the difficulties we were facing and had runners reassigned to help with card swaps during the show. Mike was managing incoming cards, and I was operating our 4 offload stations. All in all we had a busy but pretty much stress-free workflow.

When the show ended, we continued offloading while all around us the breakdown commenced. When the rental company needed their tables and power cables back, we relocated to a hotel nearby, and we finished neatly at first light. The hotel staff was very understanding and even instructed the breakfast shift to open the bar for us once we finished, so we could have a proper sit down when all was done 🍻

That morning, the cards were returned to the rental houses, and the LaCie drives were shipped to the post production house. They had planned to start working on the show on Monday morning, but because the footage was spread across a dozen drives, they had to delay that by another day until they had ingested all those clips.

Throughput

To me, the great thing about this production was that a massive creative decision was made and immediately everyone involved got behind it. Everybody understood this was an opportunity not to be missed.

That said, the number of headaches that could have been avoided was huge. If this decision had been made only a single day earlier, we’d have had a completely different show:

Production could have purchased or rented two 8-drive RAIDs like a G-SPEED Shuttle XL for a lot less money than they now spent on separate drives.

Runners would have been available for what they were meant to be doing.

There would have been zero overtime - we’d have finished before 1am.

All people involved would have had a proper night of sleep, in their own beds.

The post house could have started editing straight away.

What a lot of productions run into, this one not being an exception, is that teams often think only about the number of terabytes that need to be backed up. In the times of HDDs, it was fine to talk about 300 MB/s because things would never go faster anyway. That's a long time ago. Today, we should be talking about how many terabytes we need to move from one drive onto another, and how long that takes. It’s about the total throughput, not a drive’s top speed.
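To make that concrete, here is a small sketch that converts a transfer speed into terabytes per hour and then into a time estimate. The helper names are made up, the 300 MB/s figure is simply the HDD-era number mentioned above, and the 22.5 TB is one copy of this show's footage (6 cards x 250 GB x 15 cams). Real-world offloads also lose time to card swaps and verification, which this ignores.

```python
def tb_per_hour(mb_per_second: float) -> float:
    """Convert a sustained speed in MB/s to decimal terabytes per hour."""
    return mb_per_second * 3600 / 1_000_000


def hours_to_move(total_tb: float, throughput_tb_per_hour: float) -> float:
    """Time needed to move a given amount of data at a given throughput."""
    return total_tb / throughput_tb_per_hour


throughput = tb_per_hour(300)           # about 1.08 TB per hour
hours = hours_to_move(22.5, throughput)  # one copy of the 4K footage

print(f"{throughput:.2f} TB/hour -> {hours:.1f} hours for 22.5 TB")
```

At roughly 1 TB per hour, a single station would need close to 21 hours for one pass over the footage, which is the kind of number that should be on the table during planning.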

To Mike’s credit, he did upfront calculations to understand how long it would take him, but that’s just because he’s a professional. It should be an integral part of production planning too. We shouldn't be talking about capacity (TB) or speed (MB/s), but about throughput - measured in terabytes per hour.

After all, time's our scarcest resource - not terabytes.

Black Sabbath - The End Live in Birmingham